The world of artificial intelligence (AI) has been witnessing remarkable advancements with cutting-edge tools built on large language models, such as Auto-GPT and Jarvis. These tools are transforming the AI landscape and sparking interesting discussions about their capabilities and potential applications. 🤖

Auto-GPT is an autonomous agent building on OpenAI’s large language model (LLM). Meanwhile, Jarvis, inspired by Iron Man’s ever-loyal virtual assistant, aims to bring a new level of AI-powered assistance to users.

Auto-GPT vs Jarvis: Overview

Both are powerful AI systems that handle a variety of tasks and applications, which makes them useful tools for developers and researchers alike. 👨‍💻

Auto-GPT 🤖

Auto-GPT is an experimental open-source project that aims to make the GPT-4 model fully autonomous. It showcases what AI can achieve with minimal human intervention. Because it acts autonomously, it may generate content or take actions that are not in line with real-world business practices or legal requirements.

🔗 Recommended: Setting Up Auto-GPT Any Other Way is Dangerous!

Auto-GPT can be extended with custom tooling and has a growing community of users. The project targets GPT-4, but according to some users it also works well with GPT-3.5.
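To make the idea of an autonomous agent with custom tools more concrete, here is a minimal sketch of a plan, act, observe loop. It is not Auto-GPT’s actual code: `TOOLS`, `fake_planner`, and `run_agent` are hypothetical names, and the planner is a hard-coded stub standing in for a call to a language model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry: maps a tool name to a plain Python function.
TOOLS: Dict[str, Callable[[str], str]] = {
    "shout": lambda text: text.upper(),
    "word_count": lambda text: str(len(text.split())),
}


def fake_planner(goal: str, history: List[Tuple[str, str]]) -> Tuple[str, str]:
    """Stand-in for an LLM call: returns the next (tool, argument) pair.

    A real agent would send the goal and the history to a language model
    and parse its reply. This stub follows a fixed two-step plan instead.
    """
    if len(history) == 0:
        return "shout", goal
    if len(history) == 1:
        return "word_count", history[-1][1]
    return "finish", history[-1][1]


def run_agent(goal: str, max_steps: int = 5) -> List[Tuple[str, str]]:
    """Repeat plan -> act -> observe until the planner says 'finish'."""
    history: List[Tuple[str, str]] = []
    for _ in range(max_steps):
        tool, argument = fake_planner(goal, history)  # plan
        if tool == "finish":
            break
        result = TOOLS[tool](argument)                # act
        history.append((tool, result))                # observe
    return history


if __name__ == "__main__":
    for tool, result in run_agent("write a haiku about robots"):
        print(f"{tool}: {result}")
```

Adding a custom tool in this sketch means adding one entry to `TOOLS`. The `max_steps` cap is a design choice worth keeping in any real agent, since it stops a confused planner from looping (and spending API credits) forever.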

Jarvis 💡

Jarvis is a project developed by Microsoft to connect large language models (LLMs) with machine learning models. It gives developers a system for building and integrating AI-driven solutions. Jarvis relies on major AI services, such as the OpenAI platform for its language model and Hugging Face for the models it calls.

Image Credits: GitHub

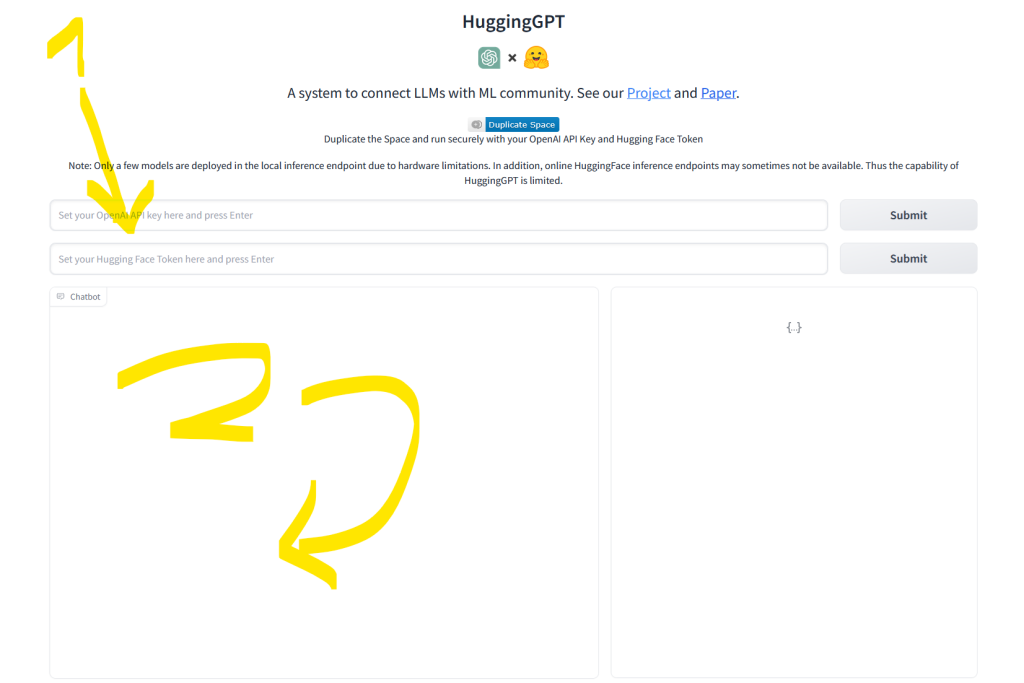

The platform provides comprehensive documentation and resources for developers to get started, as well as a Gradio demo hosted on Hugging Face Space, allowing users to easily interact with the AI and explore its potential applications.
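The HuggingGPT paper behind Jarvis describes four stages: task planning, model selection, task execution, and response generation. The sketch below walks through those stages with stubs. It is only an illustration under stated assumptions: `MODELS`, `plan_tasks`, and `handle` are hypothetical names, the "models" are placeholder functions, and keyword rules replace the language model that does the planning in the real system.

```python
from typing import Callable, Dict, List

# Hypothetical "expert models": each placeholder handles one task type.
MODELS: Dict[str, Callable[[str], str]] = {
    "image-captioning": lambda path: f"<caption for: {path}>",
    "text-to-speech": lambda text: f"<audio of: {text}>",
}


def plan_tasks(request: str) -> List[Dict[str, str]]:
    """Stage 1 (task planning), stubbed with keyword rules instead of an LLM."""
    lowered = request.lower()
    tasks: List[Dict[str, str]] = []
    if "caption" in lowered:
        # "photo.png" is a made-up input file for the example.
        tasks.append({"task": "image-captioning", "input": "photo.png"})
    if "read aloud" in lowered:
        tasks.append({"task": "text-to-speech", "input": request})
    return tasks


def handle(request: str) -> str:
    """Run the remaining stages for every planned task."""
    outputs: List[str] = []
    for task in plan_tasks(request):
        model = MODELS[task["task"]]          # Stage 2: model selection
        outputs.append(model(task["input"]))  # Stage 3: task execution
    if not outputs:
        return "No suitable model found."
    return " | ".join(outputs)                # Stage 4: response generation


if __name__ == "__main__":
    print(handle("Please caption my picture"))
```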

TL;DR: Auto-GPT explores the full autonomy of AI models, while Jarvis provides a versatile platform for developers to create AI-driven applications with popular language models, such as GPT-4. Both projects are valuable resources for anyone looking to leverage the power of AI. 🚀

| Criteria | Auto-GPT | JARVIS (HuggingGPT) |
|---|---|---|
| Architecture | GPT-3.5 and GPT-4 via the OpenAI API | ChatGPT as controller, combined with models hosted on Hugging Face |
| Developer | Open-source community (Significant Gravitas) | Microsoft |
| Goal | Pursue user-defined goals autonomously with minimal human intervention | Bring together multiple models (image, text, audio generation) under one language-model controller |
| API Key | Required | Required |
| Hugging Face Token | Not required | Required |
| Access to Internet | Required | Required |
| Available in HuggingFace | Not by original author | Yes |
| Access to other language models | No | Included |
| Availability | Public (GitHub) | Public (GitHub) |
| Training Data Cutoff | September 2021 | Depends on model |

Sources: see below.

Comparison of Key Features

Let’s compare the key features of Auto-GPT and Jarvis, two state-of-the-art AI projects from the open-source community and Microsoft, respectively.

Language Models

Auto-GPT is not a language model itself: it is an open-source agent that drives OpenAI’s GPT-3.5 and GPT-4 models to pursue a goal step by step. Jarvis, on the other hand, is a unified AI system created by Microsoft that uses an LLM such as ChatGPT as a controller to bring together multiple specialized models (source).

Here’s an example screenshot of Jarvis, aka HuggingGPT, on Huggingface:

Performance

No specific performance metrics are available for Auto-GPT; the quality of its output depends on the underlying OpenAI GPT models. Jarvis, as a unified platform, combines ChatGPT with task-specific models to cover natural language understanding and generation as well as other modalities.

🖥️ Jarvis

Ease of Use

Auto-GPT needs only an OpenAI API key, which makes it relatively easy to integrate into projects. Jarvis additionally needs a Hugging Face token, but its hosted demo lets you try it without a local installation.

Personally, I found Jarvis on Hugging Face really easy to use compared to installing Auto-GPT with Docker, which can be a pain.

With these key features in mind, Auto-GPT and Jarvis exhibit different strengths in terms of language models, performance, and ease of use.

Popular Use Cases

In this section, we’ll explore popular use cases for Auto-GPT and Jarvis, covering the following topics: Chatbots and AI Agents, Generative AI, and Machine Learning Classification.

Chatbots and AI Agents

Auto-GPT and Jarvis are both highly versatile and can be effectively utilized for creating chatbots and AI agents 🤖. Their natural language understanding capabilities make it possible for developers to craft conversational interfaces that are responsive and engaging. Auto-GPT is open source, which makes it an accessible option for developers, while Jarvis draws on the models hosted on Hugging Face.

Generative AI

Auto-GPT, which builds on OpenAI’s GPT models, excels at generating high-quality natural language content 📝. It can be employed for various tasks, such as creating articles, poems, or stories, and drafting emails (source). By leveraging Auto-GPT’s generative properties, developers can create unique content and automate text generation across various domains.

Machine Learning Classification

Both Auto-GPT and Jarvis can be effectively used for machine learning classification tasks 🔍. These AI-powered models can sift through vast amounts of data and recognize patterns, enabling them to classify different entities.

Their robustness and adaptability allow them to function across several use cases, such as analyzing text or images, sentiment analysis, or recommending relevant items. With Python as a popular language for machine learning developers, it’s easy to integrate these AI models into various projects.

These use cases demonstrate the versatility and practical applications of Auto-GPT and Jarvis in the ever-evolving world of artificial intelligence.

Supported Technologies and Frameworks

In the race for AI supremacy between Auto-GPT and Jarvis, it’s essential to explore the technologies and frameworks employed by both tools. This section highlights the various frameworks, libraries, and platforms used by Auto-GPT and Jarvis, enabling better understanding and comparison.

Frameworks and Libraries 📘

Auto-GPT is an open-source Python application that builds on OpenAI’s GPT-3.5 and GPT-4 models to generate content and carry out tasks. Meanwhile, Jarvis is an AI system created by Microsoft that consolidates several AI services into one tool.

- Auto-GPT benefits from the foundation of OpenAI’s GPT models, providing impressive language generation capabilities.

- Jarvis uses a language model as a controller and integrates various models into one user-friendly interface.

Hugging Face, a popular platform and library ecosystem for natural language processing, is central to Jarvis, which draws its models from there. Hugging Face supports many different language models and backend engines, making it a common choice for AI developers.

In fact, when running Jarvis (HuggingGPT) on Huggingface I realized that it can also create audio files. See here:

I haven’t seen Auto-GPT creating multimedia outputs, so this is a lovely demonstration of the potential expressiveness of Jarvis. Anyway, the resulting audio didn’t really say anything valuable, so more work is definitely needed!

Platforms 🖥️

Both Auto-GPT and Jarvis are available on different platforms, enabling users to integrate these AI tools into their workflows. Auto-GPT, being an open-source project, primarily functions through its command-line interface (CLI) for users to access and interact with the model.

On the other hand, Jarvis is a part of Microsoft’s AI ecosystem (source). NVIDIA GPUs can accelerate the models that Jarvis runs locally. Note that NVIDIA’s similarly named conversational AI framework, Jarvis (since renamed Riva), is a separate product.

TL;DR: Auto-GPT is backed by a large open-source community and builds on OpenAI’s models, while Jarvis is backed by Microsoft and builds on Hugging Face models that can run on NVIDIA GPUs. These technologies and platforms significantly contribute to the capabilities and flexibility offered by both tools.

Installation and Setup

Next, I’ll guide you through the process of implementing Auto-GPT and Jarvis. Both of these AI technologies can power chatbots, assist with research, and perform various natural language processing tasks.

Keep in mind that while comparing AI technologies can feel like comparing 🍎 to 🍊, understanding their usage will help you make an informed decision.

Auto-GPT

Auto-GPT is an open-source project that builds on OpenAI’s GPT models. To use Auto-GPT, you’ll need access to the OpenAI API. Once you have your API key, you can follow the documentation provided by 💻 OpenAI to set up authentication and make API calls to perform tasks like text generation or inference.

After you’ve created your OpenAI token (which you’ll need for both Auto-GPT and Jarvis), follow my detailed installation instructions on the Finxter blog: 👇

🔗 Recommended: Setting Up Auto-GPT Any Other Way is Dangerous!

Jarvis

Jarvis is an AI assistant developed by Microsoft. To get started with Microsoft Jarvis, follow these steps:

- Sign up for an API key from OpenAI, as described in the Auto-GPT section above.

- Get your Huggingface token by following the steps in this guide.

- Set up the authentication, using the instructions provided in the 💼 Beebom article that shows you how to configure Jarvis with the API keys.
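Before launching either tool, it can help to check that the credentials from the steps above are actually set. The following is a small sketch, not part of either project: the environment variable names `OPENAI_API_KEY` and `HF_TOKEN` are assumptions for illustration (each tool may instead read its keys from its own configuration file), and `REQUIRED` and `missing_credentials` are hypothetical helpers.

```python
import os
from typing import Dict, List, Mapping, Optional

# Assumed variable names; adjust them to wherever your setup stores the keys.
REQUIRED: Dict[str, List[str]] = {
    "Auto-GPT": ["OPENAI_API_KEY"],
    "Jarvis": ["OPENAI_API_KEY", "HF_TOKEN"],
}


def missing_credentials(tool: str, env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the names of credentials that are unset or empty for a tool."""
    if env is None:
        env = os.environ
    return [name for name in REQUIRED[tool] if not env.get(name)]


if __name__ == "__main__":
    for tool in REQUIRED:
        missing = missing_credentials(tool)
        status = "ready" if not missing else "missing " + ", ".join(missing)
        # Only the variable names are printed, never the secret values.
        print(f"{tool}: {status}")
```

The sketch deliberately reports only which names are missing. Treat both tokens like passwords and avoid pasting them into screenshots, logs, or public repositories.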

Here you can see the Huggingface token in my case:

Community and Growth

Both Auto-GPT and Jarvis have attracted attention and engagement within the AI developer community. This section examines their popularity and developmental activity while highlighting key aspects, such as dependencies and API keys.

Popularity

Auto-GPT, as an open-source project, has caught the interest of developers. Its support for Linux systems and simple API key setup foster user adoption.

Comparatively, Jarvis also appeals to developers, being featured in the Top 5% of largest communities on Reddit. The platform provides powerful AI capabilities similar to Auto-GPT, inspiring numerous discussions and comparisons.

If you need a more quantitative measure of popularity, consider the GitHub stars of JARVIS (20k) and Auto-GPT (135k): Auto-GPT has roughly seven times as many stars!
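For the curious, here is the arithmetic behind that comparison as a quick sketch. The star counts are the rounded figures quoted above, so the result is approximate, and star counts change over time.

```python
# Rounded GitHub star counts as quoted in this article.
auto_gpt_stars = 135_000
jarvis_stars = 20_000

ratio = auto_gpt_stars / jarvis_stars
print(f"Auto-GPT has {ratio:.2f}x the stars of JARVIS")  # 6.75x, i.e. roughly seven times
```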

Developer Activity

Auto-GPT’s developer activity is notable, primarily due to its ability to create 🔥 autonomous AI agents within various applications. Continuous updates and experiments contribute to its growing knowledge-base and expanding community of collaborators.

Jarvis, on the other hand, boasts a vibrant GitHub presence, with insights on LibHunt illustrating the project’s growth in stars and recent commits. Its well-organized codebase and active maintenance encourage adoption by a broad range of developers.

Criticisms and Potential Issues

Limitations

🤖 Auto-GPT and Jarvis, while impressive, face limitations that can hinder their performance. Built on technologies like GPT-4 and GPT-3.5, these AI agents excel in generating text but can struggle with understanding context or specifics. As a result, they might produce creative yet irrelevant outputs.

💡 Integration with varying platforms and other AI tools is crucial. Technologies like AgentGPT and generative AI can complement AI agents, yet their interoperability might be limited. Users should be aware of compatibility when using AI systems simultaneously.

✍️ Auto-generated content lacks a human touch. While shortening text à la Hemingway is doable, capturing the unique expressions and nuances a human writer offers proves challenging for AI.

Controversies

❗️ Ethics concerns arise in the world of AI agents. Auto-GPT may unintentionally generate inappropriate, biased, or misleading content due to its vast yet potentially outdated knowledge base. Users and developers should exercise caution and have checks in place to mitigate harmful outputs.

🔒 Security remains a pressing issue. AI services like GPT4All may be vulnerable to cyber-attacks and data breaches, so ensuring a secure environment for users is vital.

🌎 The widespread adoption of Auto-GPT and Jarvis raises questions about their impact on employment, as they automate traditionally manual tasks. Society must weigh the benefits of increased productivity against potential job displacement, and plan accordingly.

Future Developments

🚀 The potential of AI technologies like Auto-GPT and Jarvis is astounding, as both platforms continue to evolve in the realms of natural language processing and task automation. In this section, we will explore how these technologies may integrate with various tools and environments like Ubuntu, PyTorch, and CUDA for more efficient performance.

Ubuntu Integration

Auto-GPT and Jarvis can possibly be integrated with the popular Linux distribution, Ubuntu, to provide a seamless user experience. Ubuntu’s robust software ecosystem could enable developers to harness the power of these AI technologies more readily, enhancing the capabilities of chatbots and virtual assistants.

PyTorch and Torchaudio

Both Auto-GPT and Jarvis can potentially be expanded with libraries like PyTorch and torchaudio that aid machine learning and audio processing, respectively. These open-source libraries can help developers to build more sophisticated AI models, allowing the chatbots to analyze and process complex data.

CUDA Acceleration

The advanced computing prowess of AI technology can potentially be further boosted using CUDA, NVIDIA’s parallel computing platform. Utilizing CUDA’s GPU acceleration, both Auto-GPT and Jarvis can rapidly execute computational tasks, thus improving processing speeds and performance.
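As a rough illustration of how a Python project can take advantage of CUDA when it is present, the sketch below picks a compute device at startup. It is an assumption-laden example rather than code from Auto-GPT or Jarvis: `pick_device` is a hypothetical helper, and it falls back to the CPU when PyTorch is not installed or no GPU is visible.

```python
import importlib.util


def pick_device() -> str:
    """Return "cuda" if PyTorch is installed and sees a GPU, else "cpu"."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"  # PyTorch is not installed, so there is nothing to accelerate
    import torch  # imported lazily so the script also runs without PyTorch

    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Running on: {pick_device()}")
```

A model loaded with PyTorch could then be moved to the chosen device with `model.to(pick_device())`, which keeps the same script usable on laptops and GPU servers alike.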

| Technology | Application |
|---|---|
| Ubuntu | Seamless user experience |
| PyTorch | Advanced machine learning |
| Torchaudio | Audio processing |
| CUDA | GPU-accelerated computation |

Demo Scripts

Developers might create demo scripts like run_gradio_demo.py to showcase the capabilities of Auto-GPT and Jarvis. These demo scripts can act as a practical introduction for users, highlighting the impressive language model-driven abilities of the chatbots to carry out complex tasks.

Integration with CNNs

In the future, it is plausible that Auto-GPT and Jarvis will incorporate convolutional neural networks (CNNs) for tasks like image analysis and recognition. With CNN integration, these AI technologies can potentially become more versatile and useful in a wider range of applications.

In summary, the developments for both Auto-GPT and Jarvis will likely involve integration with popular tools and libraries, enabling these technologies to further excel in the field of AI and push the boundaries of what is possible.

Alternative AI Models

In the world of artificial intelligence, Auto-GPT and Jarvis are not the only game-changers. This section explores alternative AI models, focusing on Facebook’s Llama model and other notable competitors, and how they tackle various tasks such as entertainment, image recognition, machine learning, and classification.

🔗 Recommended: Choose the Best Open-Source LLM with This Powerful Tool

Facebook’s Llama Model

Facebook’s Llama model (LLaMA, released by Meta AI) is gaining recognition for its impressive capabilities. 😮 It is a family of openly available large language models that can be fine-tuned for tasks like text classification and text generation.

The original Llama models process only text, so image recognition requires pairing them with a vision model. Even so, their openness makes Llama a strong contender in the AI race.

Other Notable Competitors

Several other AI models are pushing boundaries in the industry:

- OpenAI’s CLIP connects text and images in a shared representation, which makes it a versatile model for tasks like image classification and finding images from text prompts.

- Google’s BERT focuses on natural language processing (NLP), enabling it to analyze and understand a wide range of linguistic content, from simple questions to complex sentences.

- NVIDIA’s GauGAN is designed for artistic creativity, transforming simple drawings into realistic images and digital art using machine learning techniques. 🎨

These alternative models demonstrate the growing diversity and expanding capabilities of artificial intelligence across various sectors, from entertainment to image analysis. By leveraging their strengths, businesses and researchers can harness the power of AI to solve critical problems and drive innovation.

💡 Recommended: 11 Best ChatGPT Alternatives

OpenAI Glossary Cheat Sheet (100% Free PDF Download) 👇

Finally, check out our free cheat sheet on OpenAI terminology. Many Finxters have told me they love it! ♥️

💡 Recommended: OpenAI Terminology Cheat Sheet (Free Download PDF)