When it comes to cutting-edge natural language processing technology, Auto-GPT and LangChain are two popular tools that help users tackle a variety of tasks. 🤖

💡 Auto-GPT is a sophisticated AI agent, powered by the formidable GPT-4 and GPT-3.5 engines. It is designed to provide targeted, goal-oriented solutions by segmenting larger objectives, like “establishing an online business”, into a sequence of manageable, smaller subtasks, such as “creating a Twitter account and publishing relevant content”. Then, with remarkable autonomy, it tirelessly executes these subtasks – either indefinitely or until you choose to halt it.

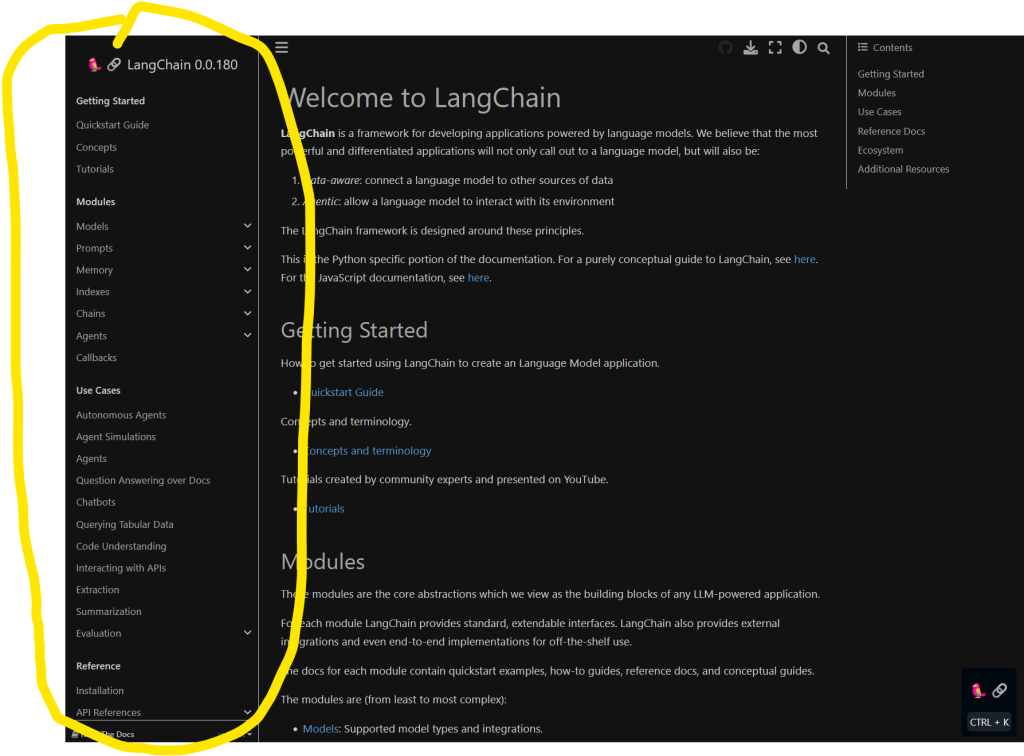

🎯 LangChain is a toolkit that leverages various language models (mainly LLMs) and utility packages to create versatile, customized applications. Because it is a language model integration framework, LangChain’s range of applications mirrors that of language models at large, including tasks like dissecting and summarizing documents, powering chatbots, and performing intricate code analysis.

The main difference between these two tools lies in what they are. Auto-GPT is an autonomous agent that pursues a goal on its own, although it is known for getting caught in logic loops and “rabbit holes”, which limits its problem-solving capacity in certain situations. LangChain is not an autonomous agent but an LLM library that helps you develop all kinds of applications on top of state-of-the-art LLMs.

Image source: this awesome article kicked Auto-GPT off

Auto-GPT vs LangChain: Core Concepts

What Is Auto-GPT? 🤖

Auto-GPT is an open-source project that turns GPT-4 into an autonomous agent. It pursues a user-defined goal by repeatedly calling OpenAI’s models on its own, without a human writing each prompt. The application automates multi-step tasks by chaining together “thoughts” to create its own prompts.
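The idea of chaining “thoughts” into new prompts can be sketched as a simple loop. This is a minimal illustration and not Auto-GPT’s actual code: `fake_llm` is a hypothetical stand-in for a real model call, and `run_agent` and the prompt wording are assumptions made for this example.

```python
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; returns canned text."""
    if "Break the goal" in prompt:
        return "research niche\ncreate Twitter account\npublish content"
    # For a subtask prompt, pretend the last line (the task) was completed.
    return f"done: {prompt.splitlines()[-1]}"


def run_agent(goal: str, llm: Callable[[str], str], max_steps: int = 5) -> List[str]:
    """Split a goal into subtasks, then feed each result into the next prompt."""
    plan = llm(f"Break the goal into subtasks, one per line.\nGoal: {goal}")
    subtasks = [line.strip() for line in plan.splitlines() if line.strip()]

    results: List[str] = []
    context = ""
    for task in subtasks[:max_steps]:
        # The previous result becomes part of the next prompt ("chaining").
        prompt = f"Goal: {goal}\nPrevious result: {context}\n{task}"
        context = llm(prompt)
        results.append(context)
    return results


results = run_agent("establish an online business", fake_llm)
print(results)
```

A real agent would also let the model revise the plan after each step; the fixed plan here keeps the sketch short.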

With this app, users can experience advanced automation in various tasks and processes. Auto-GPT’s main strengths lie in its ability to adapt and learn, making it a useful tool for businesses and individuals alike. However, it has been noted that Auto-GPT may sometimes get stuck in logic loops, repeat steps, or go down “rabbit holes” (GitHub discussion).

I’ve personally experienced multiple instances of such an infinite loop where Auto-GPT researches stuff forever and never produces any meaningful result. More work needed!
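One common way to contain this kind of runaway behavior is a step budget combined with a check for repeated actions. The sketch below shows that general idea only; it is an assumption for illustration, not a description of how Auto-GPT handles loops, and `run_with_loop_guard` is a hypothetical helper.

```python
from typing import Dict, Iterable, List


def run_with_loop_guard(actions: Iterable[str], max_steps: int = 10,
                        max_repeats: int = 2) -> List[str]:
    """Accept proposed actions until the budget runs out or one repeats too often."""
    seen: Dict[str, int] = {}
    executed: List[str] = []
    for step, action in enumerate(actions):
        if step >= max_steps:
            break  # hard budget: never run forever
        seen[action] = seen.get(action, 0) + 1
        if seen[action] > max_repeats:
            break  # same action proposed too many times: likely stuck
        executed.append(action)
    return executed


print(run_with_loop_guard(["search", "search", "search", "write"]))
```

With the defaults, the third identical "search" stops the run, so the agent never reaches an endless research phase.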

💡 Recommended: 30 Creative AutoGPT Use Cases to Make Money Online

What Is LangChain? 🧬

LangChain offers a suite of tools that makes it easier for developers to incorporate LLMs into their applications, regardless of the complexity or scale. It abstracts the complexities of working with LLMs behind simple interfaces for prompts, chains, memory, and agents. It does not itself deploy, scale, or fine-tune models; those tasks remain with the model provider.

Moreover, LangChain is built with modularity in mind. It supports various language models, allowing developers to switch or integrate different models as per their application needs. This makes LangChain a flexible and powerful tool for developers working on natural language processing applications.
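The modularity described here boils down to coding against a small common interface so that models can be swapped freely. The following sketch illustrates that design idea in plain Python. It does not use LangChain’s API: `TextModel`, `UpperModel`, `ReverseModel`, and `summarize` are hypothetical names, and the two toy “models” only transform strings.

```python
from typing import Protocol


class TextModel(Protocol):
    """Anything with a generate() method can act as a model."""
    def generate(self, prompt: str) -> str: ...


class UpperModel:
    """Toy 'model' that returns the prompt in upper case."""
    def generate(self, prompt: str) -> str:
        return prompt.upper()


class ReverseModel:
    """Toy 'model' that returns the prompt reversed."""
    def generate(self, prompt: str) -> str:
        return prompt[::-1]


def summarize(document: str, model: TextModel) -> str:
    """Application code depends only on the interface, not on a vendor."""
    return model.generate(f"Summarize: {document}")


print(summarize("abc", UpperModel()))
print(summarize("abc", ReverseModel()))
```

Because `summarize` never names a concrete model, replacing one backend with another is a one-argument change.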

Apart from these, LangChain also offers utilities for loading and preparing data (document loaders and text splitters) and for evaluating LLM outputs. Training models is outside its scope, so it is best seen as an integration layer for LLMs in software applications rather than an end-to-end training solution.

💡 Recommended: 6 New AI Projects Based on LLMs and OpenAI

LangChain also supports reasoning and planning workflows. It works with other tools and agents, which makes it adept at deducing the steps in a plan (GitHub discussion). Combined with tools like Reflection, LangChain provides powerful features for users seeking high-quality reasoning and planning capabilities.

Image source: https://github.com/hwchase17/langchain/issues/2316

In fact, LangChain ships an experimental implementation of AutoGPT. Its from_llm_and_tools method sets up the agent from a language model and a list of tools. The ChatOpenAI module from LangChain works as a wrapper around OpenAI’s chat models, such as GPT-3.5 or GPT-4.

👩‍💻 TL;DR: While both Auto-GPT and LangChain are cutting-edge AI technologies, their core concepts differ in focus. Auto-GPT excels at automation and adapting to multi-step tasks, while LangChain thrives in reasoning and deduction when used with complementary tools and agents.

Applications and Use Cases

Natural Language Processing Tasks

Auto-GPT and LangChain are both designed to handle various natural language processing tasks. These platforms excel in interpreting, generating, and analyzing text. Both of them rely on the strengths of large language models (LLMs) to deliver effective solutions for NLP tasks🔍.

Sentiment Analysis

Tapping into the robust capabilities of these platforms, sentiment analysis becomes a breeze💨. Auto-GPT and LangChain can process large volumes of text, such as social media comments, product reviews, and customer feedback, to determine overall sentiment—be it positive, negative, or neutral.
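A typical LLM-based sentiment pipeline wraps each text in a classification prompt, normalizes the model’s answer to a fixed label set, and then aggregates the labels. The sketch below shows that flow. `keyword_llm` is a hypothetical stand-in for a real model (it only matches a few keywords), and the prompt wording and function names are assumptions for this example.

```python
from collections import Counter
from typing import Callable, Iterable

LABELS = ("positive", "negative", "neutral")


def keyword_llm(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: answers from a few keywords."""
    text = prompt.split("Text:", 1)[1].lower()
    if "love" in text or "great" in text:
        return "Positive"
    if "hate" in text or "broken" in text:
        return "negative."
    return "neutral"


def classify(text: str, llm: Callable[[str], str]) -> str:
    """Ask the model for a label and normalize its free-form answer."""
    prompt = ("Classify the sentiment as positive, negative, or neutral.\n"
              f"Text: {text}")
    answer = llm(prompt).strip().lower().rstrip(".")
    # Fall back to neutral if the model answers outside the label set.
    return answer if answer in LABELS else "neutral"


def overall_sentiment(texts: Iterable[str], llm: Callable[[str], str]) -> str:
    """Return the most frequent label across all (non-empty) texts."""
    counts = Counter(classify(t, llm) for t in texts)
    return counts.most_common(1)[0][0]


reviews = ["I love it", "great value", "It arrived broken"]
print(overall_sentiment(reviews, keyword_llm))
```

The normalization step matters in practice because real models often answer with extra punctuation or capitalization.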

Chatbots

Chatbots powered by Auto-GPT or LangChain can provide seamless conversational experiences, understanding user input and offering appropriate responses🤖.

While Auto-GPT primarily uses GPT-4 for its chatbot functionality, LangChain serves as an orchestration toolkit that combines various LLMs and utility packages to create advanced chatbots.
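Whatever model sits behind a chatbot, the application usually has to keep the conversation history and replay a window of it in each prompt. Here is a minimal sketch of that bookkeeping. It uses neither Auto-GPT nor LangChain: `ChatSession` and `echo_llm` are hypothetical names, and `echo_llm` merely reports how much history it was shown.

```python
from typing import Callable, List, Tuple


class ChatSession:
    """Keeps past turns and replays the most recent ones in each prompt."""

    def __init__(self, llm: Callable[[str], str], max_turns: int = 3) -> None:
        self.llm = llm
        self.max_turns = max_turns
        self.history: List[Tuple[str, str]] = []

    def build_prompt(self, user_message: str) -> str:
        # Only the last max_turns exchanges are included, to bound prompt size.
        recent = self.history[-self.max_turns:] if self.max_turns > 0 else []
        lines: List[str] = []
        for user, bot in recent:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {bot}")
        lines.append(f"User: {user_message}")
        lines.append("Assistant:")
        return "\n".join(lines)

    def send(self, user_message: str) -> str:
        reply = self.llm(self.build_prompt(user_message))
        self.history.append((user_message, reply))
        return reply


def echo_llm(prompt: str) -> str:
    """Hypothetical stand-in: reports how many user turns it was shown."""
    return f"I can see {prompt.count('User:')} user message(s)."


session = ChatSession(echo_llm, max_turns=1)
print(session.send("hi"))
print(session.send("again"))
```

Limiting the window keeps prompts within the model’s context size at the cost of forgetting older turns.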

💡 Recommended: How I Created a High-Performance Extensible ChatGPT Chatbot with Python (Easy)

AI Content Generation

Content generation is another valuable use case for both Auto-GPT and LangChain. Utilizing their advanced NLP capabilities, these platforms can draft high-quality articles📝, blogs, and even podcast scripts, transforming simple user prompts into well-structured and engaging content.

Website and Subscription Management

Integrating Auto-GPT or LangChain into website and subscription management systems can considerably simplify the user experience. AI agents can assist in managing user accounts, handling subscription-related questions, and even providing personalized content suggestions based on user preferences🌐.

TL;DR: Auto-GPT and LangChain provide developers with powerful tools for tackling a wide range of NLP tasks. With their unique strengths and versatile use cases, these platforms empower businesses to deliver better user experiences and automate various processes🚀.

💡 LangChain is the better fit when you want a general-purpose programming library (e.g., in Python) for accessing the power of LLMs, whereas Auto-GPT is an autonomous application built on GPT LLMs.

Implementation

This section gives a concise overview of implementing Auto-GPT and LangChain, covering API keys and tokens as well as Docker integration.

Using API Keys and Tokens 🔑

To integrate Auto-GPT or LangChain, developers need API keys or tokens to authenticate with the underlying model providers. Acquiring them usually involves signing up with the respective service (for example, OpenAI), generating a key, and following best practices for handling it securely, such as keeping it out of source control.
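A common way to handle keys securely is to read them from an environment variable instead of hard-coding them. The sketch below shows that pattern. `OPENAI_API_KEY` is the variable name conventionally used for OpenAI keys; `load_api_key` and `mask` are hypothetical helpers written for this example.

```python
import os


def load_api_key(var_name: str = "OPENAI_API_KEY") -> str:
    """Read a key from the environment and fail loudly if it is missing."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"Set the {var_name} environment variable first.")
    return key


def mask(key: str, visible: int = 4) -> str:
    """Hide all but the last few characters so keys are safe to log."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]


print(mask("sk-test-1234"))
```

Failing early with a clear message is friendlier than an authentication error deep inside an agent run.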

Docker Integration 🐳

Docker can help streamline the development and deployment of Auto-GPT and LangChain projects. By containerizing and isolating the different components of the implementation, developers can ensure consistent and reproducible environments.

This can be especially helpful when working with vector databases, logs, and other resources that may require specific configurations. Always refer to the official Docker documentation for guidance and best practices.

Advantages and Limitations

Auto-GPT Benefits and Drawbacks

Auto-GPT is an innovative open-source autonomous AI agent 💡 based on the GPT-4 language model, automating the multi-step prompting process to achieve a user-defined goal such as:

- Research a book topic

- Create an online business

- Solve world hunger 😢

Self-Prompting: It combines task chaining with natural language to execute complex tasks through a simplified user experience.

One major advantage of Auto-GPT lies in its versatility in NLP applications. Being open-source, it offers a cost-effective option to build autonomous agents and AI content generators 😺.

However, Auto-GPT has its limitations. It tends to get caught in logic loops and often repeats steps ⚠️. It might take a wrong turn, going down “rabbit holes” and compromising its performance in certain tasks like deducing steps in a plan (source).

LangChain Benefits and Drawbacks

LangChain, on the other hand, focuses on providing a full-fledged library (pip install langchain) with reasoning abilities, which makes it effective for inferring steps in a plan when used in combination with frameworks like Reflection. It enables developers to build powerful language model-based applications and integrates with libraries like GPT-Index, Haystack, and Hugging Face. (source)

As a standalone framework, LangChain is remarkably useful for creating applications in the domain of NLP. But LangChain’s primary focus on reasoning may limit its application in other areas of AI and autonomous agents. Like Auto-GPT, LangChain is open source (released under the MIT license), so licensing does not restrict its adoption.

Comparison with Similar Technologies

In this section, we will compare Auto-GPT and LangChain with other relevant technologies, such as GPT-3.5, GPT-4, Pinecone, and vector databases.

GPT-3.5 and GPT-4

GPT-3.5 and GPT-4 are powerful natural language models developed by OpenAI. Auto-GPT is a specific goal-directed use of GPT-4, while LangChain is an orchestration toolkit for gluing together various language models and utility packages. The implementation of Auto-GPT could have used LangChain but didn’t 🤔 (source).

💡 Recommended: Auto-GPT vs ChatGPT

Pinecone and Vector Databases

Pinecone is a vector database service that specializes in similarity search and personalization. It helps users find items that are closely related in a high-dimensional space.
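Similarity search in a high-dimensional space can be illustrated with a brute-force cosine-similarity ranking. This is a toy sketch of the concept, not Pinecone’s API; production vector databases typically use approximate nearest-neighbor indexes instead of scanning every vector. The item names and three-dimensional vectors are made up for this example.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], index: Dict[str, Sequence[float]],
          k: int = 2) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and keep the best k."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up embeddings; a real system would get these from an embedding model.
index = {
    "running shoes": [0.9, 0.1, 0.0],
    "hiking boots": [0.8, 0.3, 0.1],
    "coffee maker": [0.0, 0.2, 0.9],
}

for name, score in top_k([1.0, 0.0, 0.0], index):
    print(f"{name}: {score:.3f}")
```

The two footwear items rank above the coffee maker because their vectors point in nearly the same direction as the query.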

Neither Auto-GPT nor LangChain depends on Pinecone; however, vector databases like Pinecone can complement both by handling data storage and retrieval, for example as long-term memory for an agent.

💡 Recommended: Auto-GPT: Command Line Arguments and Usage