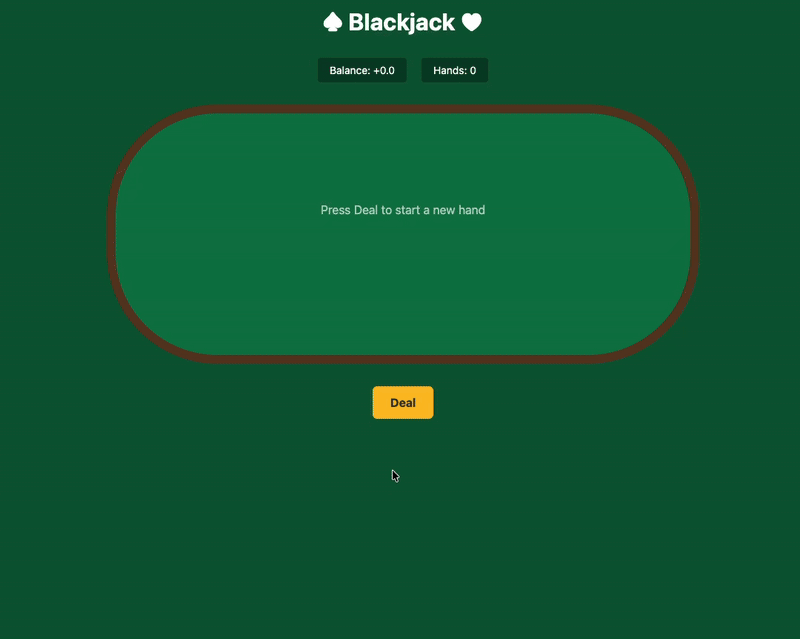

Stop Testing LLMs with Poetry: Use Blackjack Instead

🙏 Image and research source: Thomas Taylor (GitHub)

If you want to see what an LLM is really good at (and where it still slips), don’t ask it to write a poem or generate code. Ask it to make the same small decision again and again under clear rules. That is why blackjack basic strategy …