I just completed this fun puzzle creator GPT that tracks your Elo rating and challenges you with Python puzzles in the style of the Finxter app. It tracks your skills as you progress through successively more challenging Python puzzles.

🔗🧑💻 Try It Yourself: Coffee Break Python GPT (Finxter Original)

You can try it yourself; it’s fun!

Iteratively solving puzzles of varying difficulty works thanks to GPT-4 Turbo’s larger context window of up to 128,000 tokens. I tried it before with GPT-4, but it couldn’t handle the ever-growing conversation context, which led to weird behavior.

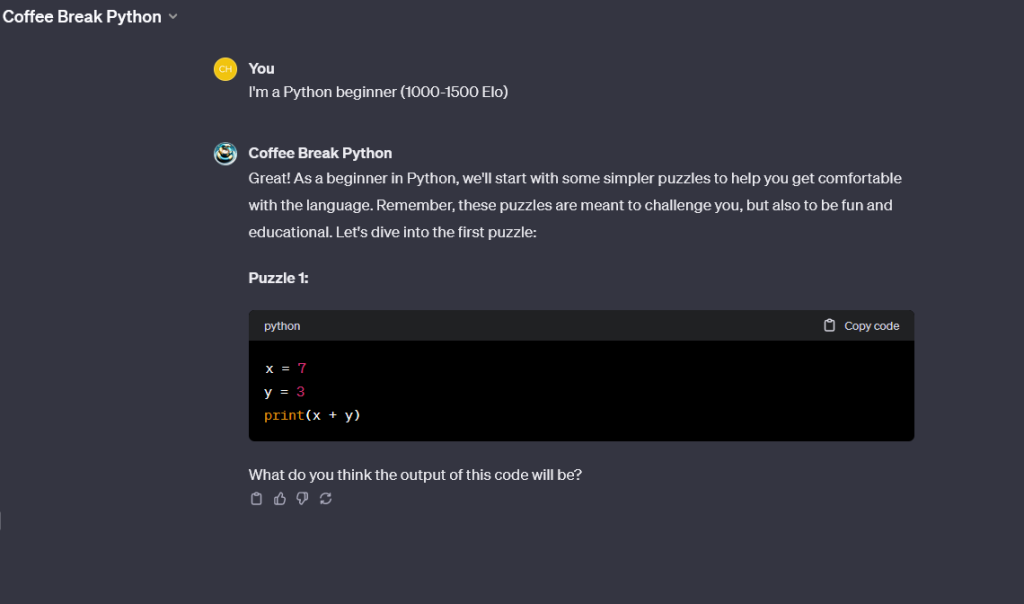

Let’s have a look at a beginner-level puzzle (Elo 1000):

And an intermediate-level puzzle (Elo 1500):

Finally, if you choose “I’m an expert” (Elo 2000+):

You can then solve the puzzles and get harder and harder puzzles as you increase your Elo rating.
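To make the rating idea concrete, here is a minimal sketch of how an Elo rating could move after each puzzle. It assumes the standard Elo formula with a K-factor of 32 and treats each puzzle as an opponent with its own rating; the function names and the K value are illustrative assumptions, not necessarily what the GPT or the Finxter app uses internally.

```python
def expected_score(player_elo: float, puzzle_elo: float) -> float:
    """Probability of solving the puzzle under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((puzzle_elo - player_elo) / 400))


def update_elo(player_elo: float, puzzle_elo: float, solved: bool, k: float = 32) -> float:
    """Return the new player rating after one puzzle attempt.

    k=32 is an assumed K-factor chosen for illustration.
    """
    score = 1.0 if solved else 0.0
    return player_elo + k * (score - expected_score(player_elo, puzzle_elo))


# Hypothetical session: (puzzle rating, solved?) pairs
rating = 1000.0
for puzzle_elo, solved in [(1000, True), (1100, True), (1200, False)]:
    rating = update_elo(rating, puzzle_elo, solved)
    print(f"Puzzle {puzzle_elo}: new rating {rating:.0f}")
```

Solving a puzzle rated above your current Elo earns more points than solving an easy one, and failing a hard puzzle costs less than failing an easy one, which is why the rating converges toward your actual skill level.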


Now, here’s an interesting conversation I had that shows how incredibly self-aware and intelligent GPT-4 Turbo is:

The image demonstrates GPT-4 Turbo’s ability to self-correct through reasoning within a single prompt response. 🤯 The initial response contains an error, but without external feedback, GPT-4 Turbo assesses the given Python code and corrects itself.

This self-correction process illustrates a few key aspects of the model’s intelligence:

- Analytical Reasoning: The model can analyze code and understand the logic behind it. When it identifies that the output does not match the expected result based on the code’s logic, it can deduce the correct answer.

- Self-Evaluation: The model exhibits the ability to evaluate its own responses for accuracy, indicating a form of introspection where it can judge its initial response against the logical framework of the Python language.

- Autonomous Correction: GPT-4 Turbo can autonomously correct its own mistakes within the context of the single prompt without needing external input, which is indicative of advanced problem-solving capabilities.

This self-reasoning ability is significant because it indicates a level of understanding that goes beyond simple pattern matching or retrieval of memorized facts; it shows an ability to engage with the content in a dynamic and logically consistent manner.

In Psychology Today, the author M.D. Lewis argued that LLMs are not sentient because:

“LLMs use complex statistical techniques to predict what word (or punctuation) should be generated next in a sentence, based on having been trained on a vast dataset that includes much of the internet and an enormous number of books. LLMs do not understand the content or context of what they are spewing out in a deep, meaningful sense. They mine their massive database of information and communicate it in ways that imitate human language and intelligence. The results vary from hugely impressive to sometimes ridiculously wrong and utterly confabulated.” — Psychology Today

Well, the conversation above proves this statement wrong. LLMs do understand the content or context of what they are spewing out — or GPT-4 Turbo wouldn’t have changed its mind through self-reasoning.

In the same Psychology Today article, psychiatrist Lewis gives some indications for AIs being sentient or self-aware. Without attempting to be highly controversial, I’d say that the interaction proves that the LLM checks the boxes:

Having said this, sentience and self-awareness are just words without strict, agreed-upon definitions. I’d argue AIs today are already self-aware and, therefore, sentient to a degree. And the degree to which they are sentient is growing exponentially, with AI training costs dropping 70% annually! 👇

🧑💻 Recommended: IQ Just Got 10,000x Cheaper: Declining Cost Curves in AI Training