A good way to calculate Pearson’s r in Python, together with the p-value needed to report the significance of the correlation, is scipy.stats.pearsonr(x, y). Pingouin’s pg.corr(x, y) delivers a nice overview of the results.

What is Pearson’s “r” Measure?

A statistical correlation with Pearson’s r measures the linear relationship between two numerical variables.

The correlation coefficient r tells us how closely the values lie along an ascending or descending straight line. r ranges from -1 (perfect negative correlation) to 1 (perfect positive correlation); a value of 0 means there is no linear correlation.
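To make the definition concrete, here is a minimal sketch that computes r by hand: the sum of the products of the deviations from the means, divided by the square root of the product of the summed squared deviations. The helper name pearson_r and the toy numbers are made up for illustration and are not part of any library.

```python
import math

def pearson_r(x, y):
    """Pearson's r computed from its definition (illustrative helper)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y need the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of the products of the deviations from the means
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Sums of the squared deviations
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)

x = [1, 2, 3, 4, 5]
print(pearson_r(x, [2, 4, 6, 8, 10]))  # points on an ascending line: 1.0
print(pearson_r(x, [10, 8, 6, 4, 2]))  # points on a descending line: -1.0
```

In practice you would use one of the library functions shown later in this article; the sketch only shows what they compute.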

The prerequisites for the Pearson correlation are normally distributed, metric data (e.g., measurements of height, distance, income, or age).

For ordinal (ranked) data, or when the relationship is monotonic but not linear, you should use Spearman’s rho rank correlation instead.
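As a rough illustration of the difference between the two coefficients, the following sketch compares them on made-up data that rises steadily but not along a straight line. It assumes SciPy is installed and uses scipy.stats.spearmanr, which is not used elsewhere in this article.

```python
import numpy as np
from scipy import stats

x = np.arange(1, 11)
y = x ** 3  # strictly increasing, but not linear (toy data)

r, _ = stats.pearsonr(x, y)
rho, _ = stats.spearmanr(x, y)

# Spearman only looks at the ranks, so a perfectly monotonic
# relationship gives rho = 1 even though Pearson's r stays below 1.
print(f"Pearson r = {r:.3f}, Spearman rho = {rho:.3f}")
```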

However, the normal distribution is the least important prerequisite. For larger datasets, parametric tests are robust, so they can still be used. Keep in mind that normality tests are sensitive to small deviations and tend to reject normality on large datasets.

💡 Note: Be careful not to confuse correlation with causation. Two variables that correlate do not necessarily have a causal relationship. A third, unobserved variable may explain the correlation, or it may have occurred just by chance. This is called a spurious relationship.
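A small simulation can make the "third variable" case tangible. This is only a sketch on synthetic data: the variables x, y, and z, the noise level, and the helper residuals are all invented for illustration. Here x and y never influence each other, yet they correlate because both depend on z.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000
z = rng.normal(size=n)             # hidden third variable
x = z + 0.5 * rng.normal(size=n)   # x depends on z, not on y
y = z + 0.5 * rng.normal(size=n)   # y depends on z, not on x

r_xy = np.corrcoef(x, y)[0, 1]

def residuals(v, z):
    """Remove the linear effect of z from v with a least-squares line."""
    slope, intercept = np.polyfit(z, v, 1)
    return v - (slope * z + intercept)

# Correlation between x and y once the effect of z is removed
r_partial = np.corrcoef(residuals(x, z), residuals(y, z))[0, 1]
print(f"r(x, y) = {r_xy:.2f}, after removing z: {r_partial:.2f}")
```

The raw correlation is high, while the correlation of the residuals is close to zero, which is what we would expect when z alone drives both variables.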

Python Libraries to Calculate Correlation Coefficient “r”

We will calculate the correlation coefficient r with several packages on the iris dataset.

First, we load the necessary packages.

import pandas as pd
import numpy as np
import pingouin as pg
import seaborn as sns
import scipy.stats

Pearson Correlation in Seaborn

Many packages have built-in datasets. You can import iris from Seaborn.

iris = sns.load_dataset('iris')

iris.head()

Output:

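With seaborn’s sns.heatmap() we can get a quick correlation matrix if we pass df.corr() into the function. Because iris also contains the text column species, we restrict the calculation to the numeric columns with numeric_only=True (in pandas 2.0 and later, df.corr() raises an error otherwise).

sns.heatmap(iris.corr(numeric_only=True))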

Output:

This tells us that we have a high correlation between petal length and petal width, so we will test these variables separately.

First, we inspect the two variables with seaborn’s sns.scatterplot() to check visually whether the relationship is linear.

sns.scatterplot(data=iris, x="petal_length", y="petal_width")

Output:

There is a clear linear relationship, so we go on to calculate our correlation coefficient.

Pearson Correlation in NumPy

NumPy delivers Pearson’s r with np.corrcoef(x, y). The function returns a 2 x 2 correlation matrix; r is the off-diagonal entry.

np.corrcoef(iris["petal_length"], iris["petal_width"])
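Since np.corrcoef returns a matrix rather than a single number, a short sketch of how to pull out r may help. The two arrays below are toy values chosen for illustration, not the iris data.

```python
import numpy as np

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.1, 3.9, 6.2, 8.1, 9.8])

matrix = np.corrcoef(x, y)  # 2 x 2 array; the diagonal entries are 1
r = matrix[0, 1]            # off-diagonal entry: r between x and y

print(matrix.shape)
print(round(r, 3))
```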

Output:

Pearson Correlation in Pandas

Pandas also has a correlation function. With df.corr() you can get a correlation matrix for the whole dataframe. Or you can test the correlation between two variables with x.corr(y) like this:

iris["petal_length"].corr(iris["petal_width"])
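The following sketch shows both pandas variants on a small made-up dataframe (the column names and values are invented). It assumes pandas 1.5 or later, where df.corr() accepts the numeric_only parameter.

```python
import pandas as pd

df = pd.DataFrame({
    "length": [1.4, 4.7, 5.1, 1.3, 6.0],
    "width": [0.2, 1.4, 1.8, 0.2, 2.5],
    "label": ["a", "b", "c", "a", "c"],  # non-numeric column
})

# Correlation matrix of the numeric columns only
corr_matrix = df.corr(numeric_only=True)
print(corr_matrix)

# Correlation between two columns; Pearson is the default method
r = df["length"].corr(df["width"])
print(round(r, 3))
```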

Output:

💡 Note: NumPy and pandas do not deliver p-values, which are important if you want to report the findings. The following two solutions are better for this.

Pearson Correlation in SciPy

With scipy.stats.pearsonr(x, y) we receive r just as quickly, together with a p-value.

scipy.stats.pearsonr(iris["petal_length"], iris["petal_width"])

SciPy delivers just two values, but these are important: the first is the correlation coefficient r and the second is the p-value that determines significance.
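Because the result can be unpacked like a tuple, the two values are easy to assign to names and format for a report. The sketch below uses randomly generated data with a fixed seed instead of iris so that it runs without downloading anything; the variable names are our own.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
x = rng.normal(size=50)
y = 0.6 * x + rng.normal(size=50)  # y partly depends on x (synthetic data)

# Unpack the result: first the coefficient, then the p-value
r, p_value = stats.pearsonr(x, y)
print(f"r = {r:.3f}, p = {p_value:.4g}")
```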

Pearson Correlation in Pingouin

My favorite solution is the statistical package pingouin because it delivers all values you would need for interpretation.

If you’re not familiar with pingouin check it out! It has great functions for complete test statistics.

pg.corr(iris["petal_length"], iris["petal_width"])

Output:

The output tells us the number of cases n, the coefficient r, the 95% confidence interval, the p-value, the Bayes factor, and the power.

💡 The power is the probability that the test detects a relationship that truly exists, that is, that it correctly rejects the null hypothesis of no correlation. If the power is high, we are likely to detect a true effect.

Interpretation:

The most important values are the correlation coefficient r and the p-value. Pingouin also delivers some more useful test statistics.

If p < 0.05, we consider the test result statistically significant (0.05 is the conventional threshold).

r is 0.96, which is a very strong positive correlation, given that 1 is the maximum and indicates a perfect correlation.
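To show where the p-value comes from, this sketch recomputes it from r and the sample size n with the usual t statistic, t = r * sqrt((n - 2) / (1 - r^2)) with n - 2 degrees of freedom, and compares it with SciPy's result. It assumes the default two-sided test and uses synthetic data with a fixed seed.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(1)
n = 30
x = rng.normal(size=n)
y = 0.4 * x + rng.normal(size=n)  # synthetic data

r, p_scipy = stats.pearsonr(x, y)

# t statistic for H0 "no correlation", with n - 2 degrees of freedom
t = r * np.sqrt((n - 2) / (1 - r ** 2))
p_manual = 2 * stats.t.sf(abs(t), df=n - 2)  # two-sided p-value

print(f"SciPy: {p_scipy:.6f}, manual: {p_manual:.6f}")
```

The two values should agree up to rounding, which also shows that the p-value depends on the sample size as well as on r.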

Based on r, we can determine the effect size, which tells us the strength of the relationship, by interpreting r according to Cohen’s guidelines. There are also other interpretations for the effect size, but Cohen’s is widely used.

According to Cohen, a value of r from about 0.1 to 0.3 indicates a weak relationship, a value from 0.3 a medium effect, and a value from 0.5 upwards a strong effect. With r = 0.96, we interpret the relationship as strong.
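These thresholds are easy to wrap in a small helper. The function name cohen_label and the "negligible" label for values below 0.1 are our own additions for illustration; Cohen’s guidelines are rules of thumb, not strict cut-offs.

```python
def cohen_label(r):
    """Map |r| to Cohen's rough effect-size labels (illustrative helper)."""
    size = abs(r)  # the sign only gives the direction, not the strength
    if size >= 0.5:
        return "strong"
    if size >= 0.3:
        return "medium"
    if size >= 0.1:
        return "weak"
    return "negligible"  # label for |r| < 0.1 is our own assumption

print(cohen_label(0.96))  # strong
```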

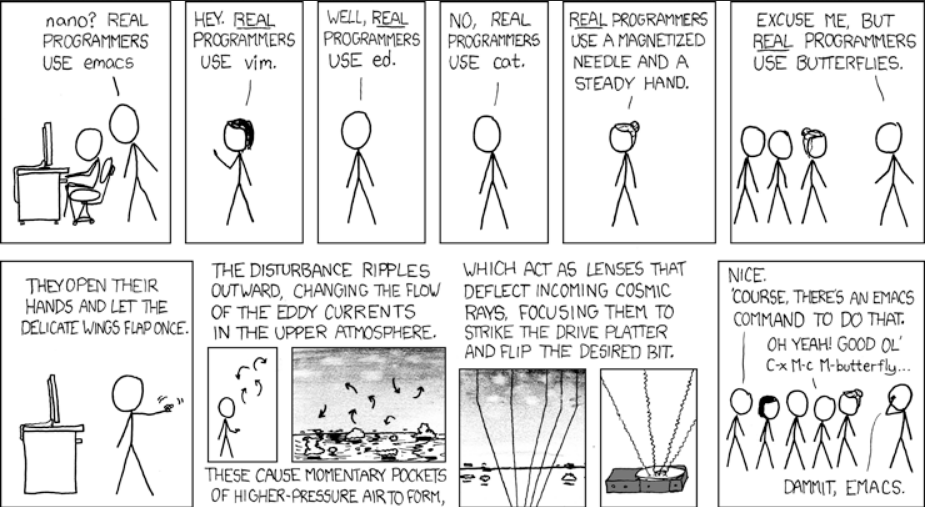
