import pandas as pd

df = pd.read_csv('my_file.csv')

df.to_parquet('my_file.parquet')

Problem Formulation

Given a CSV file 'my_file.csv', how do you convert it to a Parquet file named 'my_file.parquet'?

💡 Info: Apache Parquet is an open-source, column-oriented data file format designed for efficient data storage and retrieval using data compression and encoding schemes to handle complex data in bulk. Parquet is available in multiple languages including Java, C++, and Python.


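By far the most Pythonic solution to convert a CSV file to the Parquet format is the three-line Pandas snippet shown at the top of this article: read the CSV into a DataFrame, then write it out with to_parquet().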

CSV to Parquet in 6 Easy Steps Using Pandas

Here’s a step-by-step approach to reading a CSV and converting its contents to a Parquet file using the Pandas library:

- Step 1: Run pip install pandas if the module is not already installed in your environment.
- Step 2: Run pip install pyarrow to install the pyarrow module.
- Step 3: Run pip install fastparquet to install the fastparquet module (an alternative Parquet engine; Pandas needs only one of the two).
- Step 4: Import pandas using import pandas as pd.
- Step 5: Read the CSV file into a DataFrame using df = pd.read_csv('my_file.csv').
- Step 6: Write the Parquet file using df.to_parquet('my_file.parquet').

The code snippet to convert a CSV file to a Parquet file is quite simple (steps 4-6):

import pandas as pd

df = pd.read_csv('my_file.csv')

df.to_parquet('my_file.parquet')

If you put this code into a Python file csv_to_parquet.py and run it, the converted output file my_file.parquet is written to the current working directory.

The file output is pretty unreadable if you open the Parquet file in a text editor such as Notepad.

That’s because Parquet is a binary format that stores the data column by column with compression and encoding applied. You should read it programmatically instead, for example with Pandas, PyArrow, or tools from the Hadoop ecosystem.

CSV to Parquet Using PyArrow

By default, Pandas’ to_parquet() uses the pyarrow module when it is installed. You can do the conversion from CSV to Parquet directly in pyarrow using parquet.write_table(). This removes one level of indirection, so it can be slightly more efficient.

Like so:

from pyarrow import csv, parquet

table = csv.read_csv('my_file.csv')

parquet.write_table(table, 'my_file.parquet')

According to a mini-experiment, this is the fastest approach.

More Python CSV Conversions

If you want to convert the Parquet back to a CSV, feel free to check out this particular tutorial on the Finxter blog.

🐍 Learn More: I have compiled an “ultimate guide” on the Finxter blog that shows you the best method for converting a CSV file to each of the following: JSON, Excel, dictionary, Parquet, list, list of lists, list of tuples, text file, DataFrame, XML, NumPy array, and list of dictionaries.

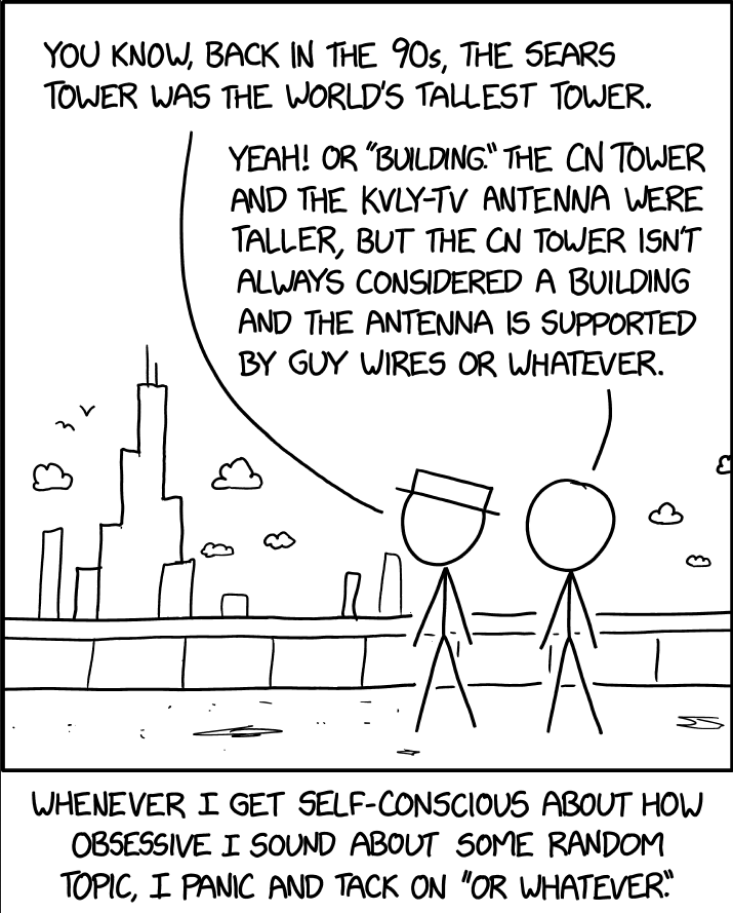

Okay, let’s finish this up with some humor, shall we?

Nerd Humor